Can trust, one of the primary bases of relationships throughout history, be quantified and measured?

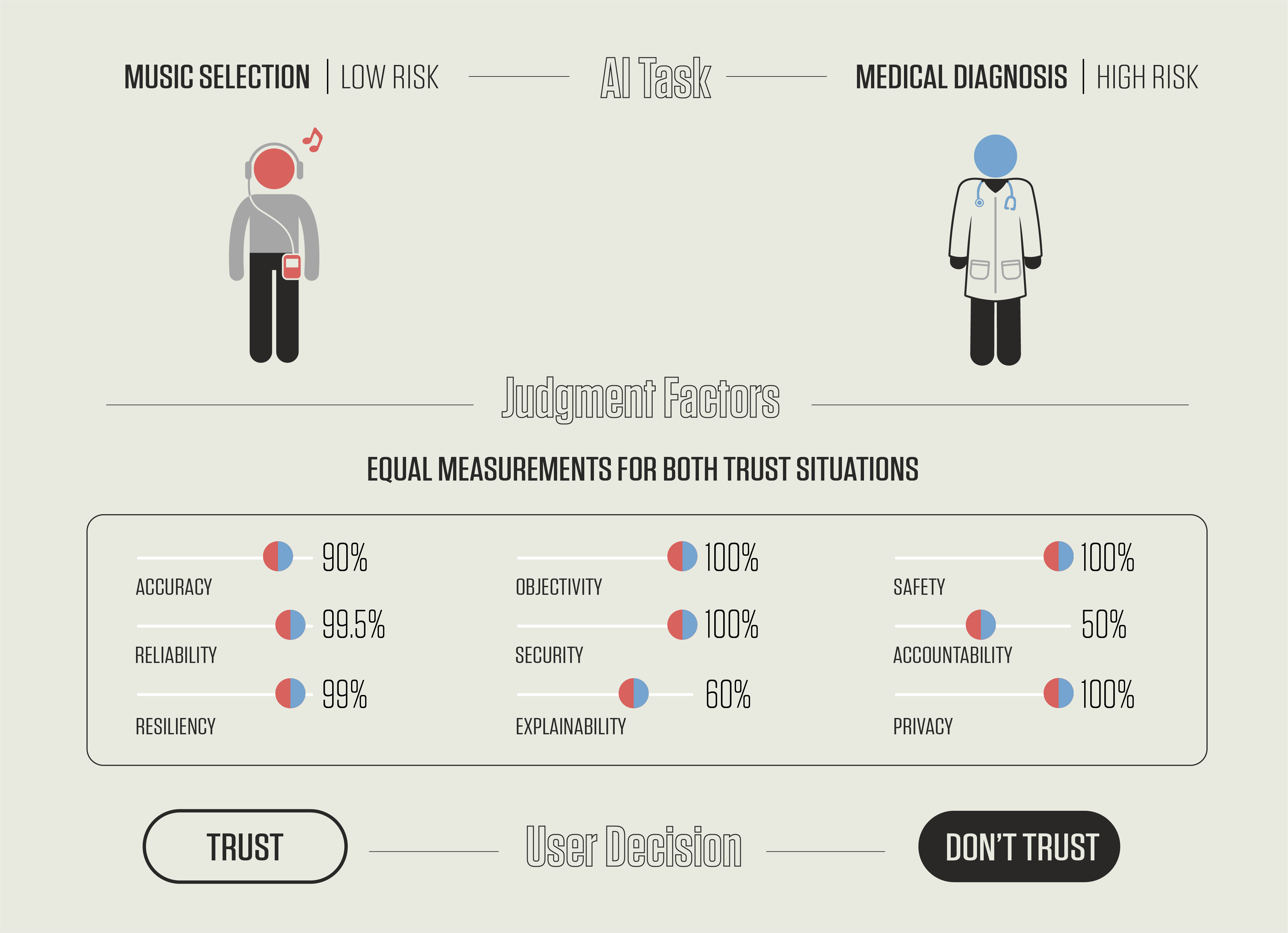

Every time you speak to a virtual assistant on your smartphone, you are talking to an artificial intelligence — an AI that can, for example, learn your taste in music and make song recommendations that improve based on your interactions. However, AI also assists us with more risk-fraught activities, such as helping doctors diagnose cancer. These are two very different scenarios, but the same issue permeates both: How do we humans decide whether or not to trust a machine’s recommendations?

This is the question that a new draft publication from the National Institute of Standards and Technology (NIST) poses, with the goal of stimulating a discussion about how humans trust AI systems. The document, Artificial Intelligence and User Trust (NISTIR 8332), is open for public comment until July 30, 2021.